Edge: Navigating Digital Dilemmas: AI Use in Food Service

By Chrissy Carroll, MPH, RD

December 3, 2025

This Ethics Connection CE article appeared in the November/December 2025 issue of Nutrition & Foodservice Edge magazine.

To earn 1.0 ETH CE credit, purchase the CE article in the ANFP Marketplace and complete the quiz.

This course is a level II continuing competence.

TECHNOLOGY IS AN INTEGRAL PART OF FOODSERVICE MANAGEMENT. From electronic medical records to menu management software, digital tools are used in many aspects of your role as a CDM, CFPP. The introduction of artificial intelligence (AI) into software, as well as new AI-specific tools, creates additional ethical considerations. Foodservice managers must balance the benefits of these tools with ethical decision making and regulatory compliance.

Below is a deep dive into four key ethical issues, each with the relevant CDM, CFPP Code of Ethics principles and guidance on how foodservice managers can navigate it.

DIGITAL DILEMMA #1: BIAS

Relevant CDM, CFPP Code of Ethics Principles: 1, 2, 3, 14

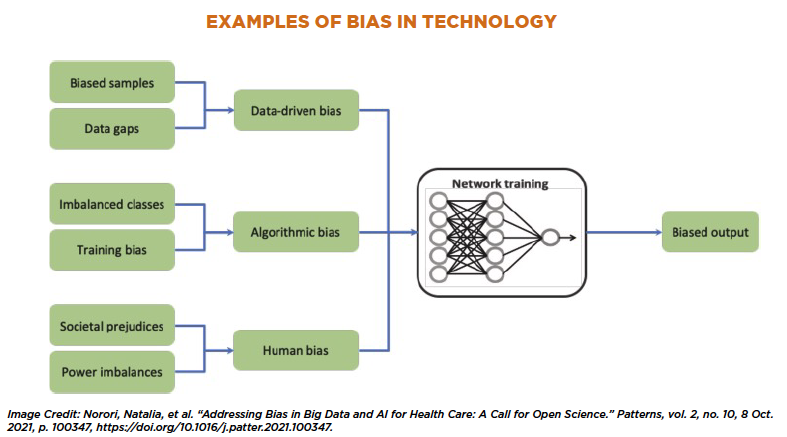

AI tools are trained on large datasets, including datasets geared toward clinical healthcare practice, and this training data can contain biased information. An article in Patterns describes two predominant forms of bias: statistical and social. Statistical bias occurs when data does not reflect the true makeup of a population. Social bias occurs when systemic inequities lead to disadvantages or unfair outcomes for particular groups. Both can affect AI tools.

For example, many demographic groups have been underrepresented in medical research, meaning that training data for an AI healthcare tool may not represent the variability in the population. This can lead to biased outputs and improper decision making.

Similarly, free generative AI tools are trained on large portions of the Internet. As one might expect, some of this material contains harmful rhetoric reflecting social bias against certain groups. Developers attempt to control for this, but prejudiced responses are still possible.

Bias can also enter AI through data collection systems that are themselves affected by social bias, through poor design processes that perpetuate existing biases, and through the prejudices of the developers themselves.

Navigating bias as an end user requires careful scrutiny of both the tool and its outputs. Before using technology that incorporates AI, research how the tool was trained and whether the dataset is representative of your population (e.g., older adults, students, or residents from particular cultural backgrounds).

For example, if an AI-based menu creation tool was trained predominantly on Midwest regional menus but your long-term care facility serves mostly Haitian immigrants, its outputs are unlikely to account for your residents' cultural food preferences.
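For readers who like to see the representativeness question as arithmetic, here is a minimal sketch. It compares each group's share of a tool's training data with that group's share of your residents and flags groups that fall well short. Every group name, percentage, and the 0.5 threshold are hypothetical illustrations; most vendors do not publish training data at this level of detail, so in practice the training-share figures would have to come from questions you put to the vendor.

```python
"""Sketch: flag groups underrepresented in an AI tool's training data.

All figures below are hypothetical placeholders, not real vendor data.
"""


def underrepresented_groups(training_share: dict[str, float],
                            population_share: dict[str, float],
                            min_ratio: float = 0.5) -> list[str]:
    """Return groups whose share of the training data is below
    min_ratio times their share of the facility population.

    Shares are fractions between 0 and 1. A group missing from the
    training data entirely is treated as having a share of 0.
    """
    flagged = []
    for group, pop_share in population_share.items():
        train_share = training_share.get(group, 0.0)
        if pop_share > 0 and train_share / pop_share < min_ratio:
            flagged.append(group)
    return flagged


# Hypothetical example: a menu tool trained mostly on Midwest regional menus,
# used in a facility whose residents are mostly Haitian immigrants.
training = {"Midwest American": 0.85, "Southern American": 0.10, "Haitian": 0.005}
residents = {"Midwest American": 0.15, "Haitian": 0.80, "Southern American": 0.05}

print(underrepresented_groups(training, residents))  # ['Haitian']
```

A ratio (rather than a simple difference) is used so that a small group that is completely missing from the training data still gets flagged.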

When using AI tools, be sure to regularly review outputs for any biases. Consider developing standard operating procedures that allow staff to override AI output in these situations, ensuring that their training explicitly covers when and how to do so.
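One way to make an override SOP auditable is to require a documented reason for every override, then tally the reasons so recurring bias becomes visible over time. The sketch below assumes a simple in-house log; the class names, fields, menu items, and staff name are all hypothetical and are not part of any particular menu or charting product.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class OverrideEntry:
    ai_output: str     # what the AI tool suggested
    replacement: str   # what staff served or documented instead
    reason: str        # why the override was needed (e.g., cultural fit)
    staff: str
    day: date


@dataclass
class OverrideLog:
    """Hypothetical SOP log of staff overrides of AI suggestions."""
    entries: list[OverrideEntry] = field(default_factory=list)

    def record(self, ai_output: str, replacement: str, reason: str,
               staff: str, day: date) -> OverrideEntry:
        # Require a reason so reviewers can spot recurring bias later.
        if not reason.strip():
            raise ValueError("Each override needs a documented reason.")
        entry = OverrideEntry(ai_output, replacement, reason, staff, day)
        self.entries.append(entry)
        return entry

    def count_by_reason(self) -> dict[str, int]:
        """Tally overrides per reason to surface patterns in AI output."""
        counts: dict[str, int] = {}
        for e in self.entries:
            counts[e.reason] = counts.get(e.reason, 0) + 1
        return counts


log = OverrideLog()
log.record("Tater tot casserole", "Haitian-style rice and beans",
           "cultural preference", "J. Smith", date(2025, 11, 3))
log.record("Pot roast with gravy", "Haitian-style stewed chicken",
           "cultural preference", "J. Smith", date(2025, 11, 4))
print(log.count_by_reason())  # {'cultural preference': 2}
```

If one reason keeps appearing, that is a signal to raise the issue with the vendor or reconsider the tool, not just to keep overriding it.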

DIGITAL DILEMMA #2: HIPAA

Relevant CDM, CFPP Code of Ethics Principle: 8

If you work in any type of healthcare system, you’re likely familiar with the Health Insurance Portability and Accountability Act (HIPAA), which safeguards protected health information (PHI). According to the U.S. Department of Health and Human Services (HHS), PHI includes all “individually identifiable health information.” This can include common identifiers (name, address, etc.), demographic information, medical conditions, the provision of healthcare services, or payment information, whenever there is a reasonable basis to believe the information could be used to identify the person.

Is it ethical to use this PHI within AI tools? The answer is a complex “it depends.”

PHI should never be entered into tools that are not specifically designed to be HIPAA compliant for health care. For example, a client’s medical record should never be uploaded to public versions of ChatGPT or Gemini for clinical decision making or menu guidance. Some public AI tools use the information you enter for training purposes, which is not permitted with PHI.

However, some AI tools are specifically designed to be HIPAA compliant for health care. For example, AI charting tools record and summarize client sessions, helping practitioners document more quickly and completely. As a CDM, CFPP, you might use these during dietary preference interviews or nutrition screenings. Clearly, these tools are exposed to PHI during the recording process and while drafting the chart note.

Whenever this type of technology is used in a way that involves PHI, the tool should be HIPAA compliant, and the organization should have a Business Associate Agreement (BAA) with the vendor that covers that use. As an end user, you should also understand how the tool handles data and recordings and what encryption protocols it uses, to confirm they meet appropriate standards. Typically, your organization and its legal team handle these reviews rather than you as a foodservice manager, but the background is helpful for reference.
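The conditions described above can be summarized as a simple yes/no gate: a tool may receive PHI only if a Business Associate Agreement (BAA) covers the intended use, the vendor does not train on your inputs, and encryption has been confirmed. The sketch below is a hypothetical checklist, not a legal test; the field names are invented for illustration, and your organization's compliance and legal teams make the actual determination.

```python
from dataclasses import dataclass


@dataclass
class AIToolProfile:
    """Hypothetical summary of what your organization has confirmed about a tool."""
    name: str
    vendor_signs_baa: bool          # Business Associate Agreement in place
    baa_covers_this_use: bool       # BAA scope includes the intended workflow
    uses_inputs_for_training: bool  # vendor trains models on what you enter
    encrypts_data: bool             # encryption confirmed per vendor documentation


def may_receive_phi(tool: AIToolProfile) -> tuple[bool, list[str]]:
    """Return (allowed, problems) for a simple PHI gate.

    Sketch only: passing this checklist does not by itself make a use
    HIPAA compliant.
    """
    problems = []
    if not tool.vendor_signs_baa:
        problems.append("no Business Associate Agreement")
    elif not tool.baa_covers_this_use:
        problems.append("BAA does not cover this use")
    if tool.uses_inputs_for_training:
        problems.append("inputs may be used for model training")
    if not tool.encrypts_data:
        problems.append("encryption not confirmed")
    return (not problems, problems)


public_chatbot = AIToolProfile("Public chatbot (free tier)", False, False, True, True)
charting_tool = AIToolProfile("Facility-approved charting tool", True, True, False, True)

print(may_receive_phi(public_chatbot))
print(may_receive_phi(charting_tool))
```

Returning the list of problems, not just a yes/no answer, gives staff a concrete reason when a tool is off-limits for PHI.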

Free public AI tools can still be valuable for general questions or for working with de-identified health information. HHS describes de-identified information as information that neither identifies an individual nor provides a reasonable basis to identify one. All potential identifiers must be removed, and there should be no way for the remaining information to be used to identify an individual.

Here’s a helpful example of improper versus acceptable use with de-identified information:

- Unacceptable public AI use: “Mrs. Jones in room 214 has a corn allergy. I’m uploading my menu for Sunshine Care for the week. What needs to be modified?”

- Acceptable public AI use: “If someone has a corn allergy, what are some common menu items and ingredients that they would need to avoid?”

The first example gives several identifiers that easily pinpoint the patient (name, room number, and facility name). The second example strips the identifiers and asks a general question that could then be used to help evaluate the menu, with no way to identify the patient.
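A facility could back up staff training with a quick pre-send screen that flags obvious identifiers before a prompt goes to a public AI tool. The sketch below checks only three kinds of identifiers from the example above (resident names, facility name, room numbers), drawn from hypothetical lists; HHS's Safe Harbor de-identification method covers 18 types of identifiers, so this is a safety net for obvious slips, not a substitute for de-identification or for staff judgment.

```python
import re

# Hypothetical facility-specific lists; a real list would come from your
# resident roster and facility details and would need regular updating.
RESIDENT_NAMES = ["Jones"]
FACILITY_NAMES = ["Sunshine Care"]
ROOM_PATTERN = re.compile(r"\broom\s*#?\s*\d+\b", re.IGNORECASE)


def find_identifiers(prompt: str) -> list[str]:
    """Return identifiers found in a prompt before it goes to a public AI tool.

    This is a screening aid, not HIPAA de-identification: it only catches
    the names and room-number pattern listed above.
    """
    found = []
    lowered = prompt.lower()
    for name in RESIDENT_NAMES + FACILITY_NAMES:
        if name.lower() in lowered:
            found.append(name)
    found.extend(m.group(0) for m in ROOM_PATTERN.finditer(prompt))
    return found


unacceptable = ("Mrs. Jones in room 214 has a corn allergy. I'm uploading my "
                "menu for Sunshine Care for the week. What needs to be modified?")
acceptable = ("If someone has a corn allergy, what are some common menu items "
              "and ingredients that they would need to avoid?")

print(find_identifiers(unacceptable))  # ['Jones', 'Sunshine Care', 'room 214']
print(find_identifiers(acceptable))    # []
```

The function flags rather than silently deletes identifiers, so a person still decides how to rewrite the question in general terms, as in the acceptable example.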

There is a lot of legal nuance here, so the best advice is to always be cautious about what you enter into non-HIPAA-compliant AI tools. Staff should also be trained on this point; they may know that discussing a patient’s medical conditions in public spaces is unacceptable but may not realize that chatting with a public AI tool falls under the same guidelines.

DIGITAL DILEMMA #3: EVIDENCE-BASED GUIDELINES

Relevant CDM, CFPP Code of Ethics Principles: 1, 6, 7

Evidence-based practice is a core tenet of health care. The goal is to combine the best available research, clinical expertise, experience, and resident rights into the decision-making frameworks we use. AI technology can be useful in guiding certain parts of the work, but it should never be responsible for final decisions.

A primary issue with AI tools is “hallucination”: the tool makes up an incorrect answer, often with a high level of confidence. As a lighthearted example, in 2024 news outlets reported that a popular AI tool told users (with conviction!) that there were only two “R’s” in the word “strawberry.” One user even asked, “Would you bet a million dollars on this?” and the AI tool replied, “Yes I would.”

While the above example is trivial, hallucinations or incorrect answers can occur in any output. In a work setting, these errors could affect patient health and satisfaction, departmental menus or budgets, or even a facility’s compliance with regulatory requirements. As such, outputs must be carefully monitored and corrected.

For example, let’s say you want to add a new recipe to your menu, based on one you’ve prepared at home. You use AI to scale the family-sized recipe up to 50 servings and to create an inventory list for it. While much of the list is accurate, the AI tool has mistakenly calculated that you need 25 cups of olive oil, far more than the roughly 1.5 cups you actually need. If you didn’t catch the error, it could lead to unnecessary purchases.
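A quick independent calculation is often enough to catch an error like the olive oil example. The sketch assumes a hypothetical home recipe that serves 4 and uses 2 tablespoons (1/8 cup) of olive oil; scaling linearly to 50 servings multiplies every amount by 50 / 4 = 12.5, giving about 1.56 cups. The AI's 25 cups is 16 times that, so the check flags it. The 25% tolerance is an arbitrary illustrative choice, and linear scaling is only a first-pass check, since seasonings and cooking fats may not scale perfectly linearly in large batches.

```python
CUPS_PER_TABLESPOON = 1 / 16  # 16 tablespoons = 1 US cup


def scale_quantity(amount: float, base_servings: int, target_servings: int) -> float:
    """Linearly scale an ingredient amount by target/base servings."""
    return amount * target_servings / base_servings


def flag_if_off(ai_amount: float, expected: float, tolerance: float = 0.25) -> bool:
    """Return True if the AI's amount differs from the expected amount by
    more than `tolerance`, expressed as a fraction of the expected amount."""
    return abs(ai_amount - expected) / expected > tolerance


# Hypothetical home recipe: serves 4 and uses 2 tablespoons of olive oil.
home_oil_cups = 2 * CUPS_PER_TABLESPOON               # 0.125 cups
expected_cups = scale_quantity(home_oil_cups, 4, 50)  # 0.125 * 12.5

print(expected_cups)                      # 1.5625
print(flag_if_off(25.0, expected_cups))   # True: 25 cups is 16x the expected amount
print(flag_if_off(1.5, expected_cups))    # False: within 25% of expected
```

A flag only means the two numbers disagree; a person still needs to recheck the recipe to see which one is right.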

Similarly, perhaps you are conducting initial nutrition screenings for new residents, and your facility allows you to use an AI charting tool to speed up documentation in the medical record. However, you notice that the draft chart note includes some inappropriate weight-loss recommendations. You must edit the note before using it; without that oversight, the error could lead to improper patient care.

The key takeaway: Always apply your own experience, expertise, and ethical judgment to client-facing decisions. Technology should support, not substitute for, your managerial choices.

DIGITAL DILEMMA #4: CONFLICT WITH ORGANIZATIONAL VALUES

Relevant CDM, CFPP Code of Ethics Principles: 1, 6, 10, 14

The last consideration is whether AI use aligns with your organization’s values and, if it doesn’t, how to adjust that use so that it does. Here are some values that could clash with AI use, along with suggestions for tackling each.

Sustainability: Your organization has been working hard to reduce food waste, source local produce, and manage resources. AI can support some of these efforts, such as optimizing menus for waste reduction. However, AI use can also conflict with this value because it consumes significant environmental resources: electricity powers the infrastructure, water cools the hardware, and the tools contribute to carbon emissions. For example, some researchers note that AI’s energy consumption could be as high as the daily electricity demand of 1.5 million homes.

Strategies to address sustainability concerns:

- Keep AI tools that help you meet other sustainability goals, and eliminate tools that are not creating meaningful change.

- Avoid AI use if traditional non-AI technologies (which are less resource-hungry) are equally effective.

- Use specific, to-the-point prompts when guiding AI tools. Avoid superfluous language, as it takes resources for the AI tool to process each word.

Resident rights: You want to ensure all residents retain their autonomy and are treated with dignity and respect. AI can help here, for example by brainstorming culturally appropriate meals, adapting menus for allergies, or freeing you to focus on the resident during a screening while your AI charting tool captures the information via recording. However, AI can also put this value at risk if transparency and proper oversight are lacking. For example, not all residents may be comfortable with the use of an AI charting tool.

Strategies to address resident rights concerns:

- Obtain informed consent for AI tools used during client interactions, such as AI charting assistants, and allow residents to opt out if desired.

- Be cautious around entering PHI into certain AI tools, as previously discussed, to avoid violating a resident’s right to privacy.

- Remember that AI tools can create generalizations, which may not be accurate for every individual.

- Involve residents in evaluating AI-generated ideas, for example by holding a taste test of a new menu item or gathering feedback on special event ideas during resident council meetings.
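The informed-consent strategy above can be built into a workflow so that the AI charting tool is used only when consent is explicitly on file. In the hypothetical sketch below, residents who declined, and residents who were never asked, both default to manual documentation, so honoring an opt-out never depends on someone remembering to check. The record format and resident IDs are invented for illustration and are not tied to any real charting system.

```python
from enum import Enum


class AIConsent(Enum):
    GRANTED = "granted"
    DECLINED = "declined"
    NOT_ASKED = "not asked"


# Hypothetical consent records keyed by an internal resident ID (not a name).
consent_records: dict[str, AIConsent] = {
    "R-0001": AIConsent.GRANTED,
    "R-0002": AIConsent.DECLINED,
}


def may_use_ai_charting(resident_id: str) -> bool:
    """Allow the AI charting tool only when consent is explicitly on file.

    Residents with no record are treated as NOT_ASKED, which (like DECLINED)
    means documenting the screening manually.
    """
    return consent_records.get(resident_id, AIConsent.NOT_ASKED) is AIConsent.GRANTED


for rid in ["R-0001", "R-0002", "R-0003"]:
    print(rid, may_use_ai_charting(rid))
```

Making "not asked" behave like "declined" is the design choice that turns opt-in consent into the default rather than an extra step.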

Community: You strive to lead your department in a way that promotes teamwork and creates a sense of community with your clients. AI may support this goal by generating creative in-service ideas, team-building exercises, or special events to foster connection among those you serve. However, AI can also undermine this value by fostering overreliance on technology, favoring standardization over personalization, and minimizing the personal touches and soft skills that matter in client-facing interactions. Clients may also feel devalued if they worry that decisions about their health and food (the latter closely tied to comfort and identity) are being made by a “robot.”

Strategies to address community concerns:

- Be transparent about AI tools, and use them as a method of support, not as a replacement for human interaction.

- Leverage AI to create ideas for community connections. For example, you could create conversation starters to use on table tents at resident dinners, or you could develop themed meals for your school foodservice setting.

- Adapt AI outputs to fit cultural preferences, local traditions, and regional nuances.

- Engage staff and potentially clients in collaborative discussions around AI use.

- Avoid replacing human connection with fully automated systems. Automation can be useful in many areas, but there should always be some aspects of human-centered interaction in the foodservice setting.

FINAL THOUGHTS

As AI becomes increasingly embedded in daily operations, foodservice managers will face new ethical dilemmas. The CDM, CFPP must carefully consider bias, HIPAA compliance, evidence-based practice, and alignment with organizational values. They must also ensure appropriate input to tools, monitor outputs, and train staff on these nuanced issues. Doing so helps ensure that technology enhances the operation without putting clients, the department, or the facility at risk.

About the Author

Chrissy Carroll, MPH, RD

Chrissy Carroll is a registered dietitian, freelance writer, and brand consultant based in central Massachusetts.